
Semantic Deduplication System for Real-Time News Aggregation

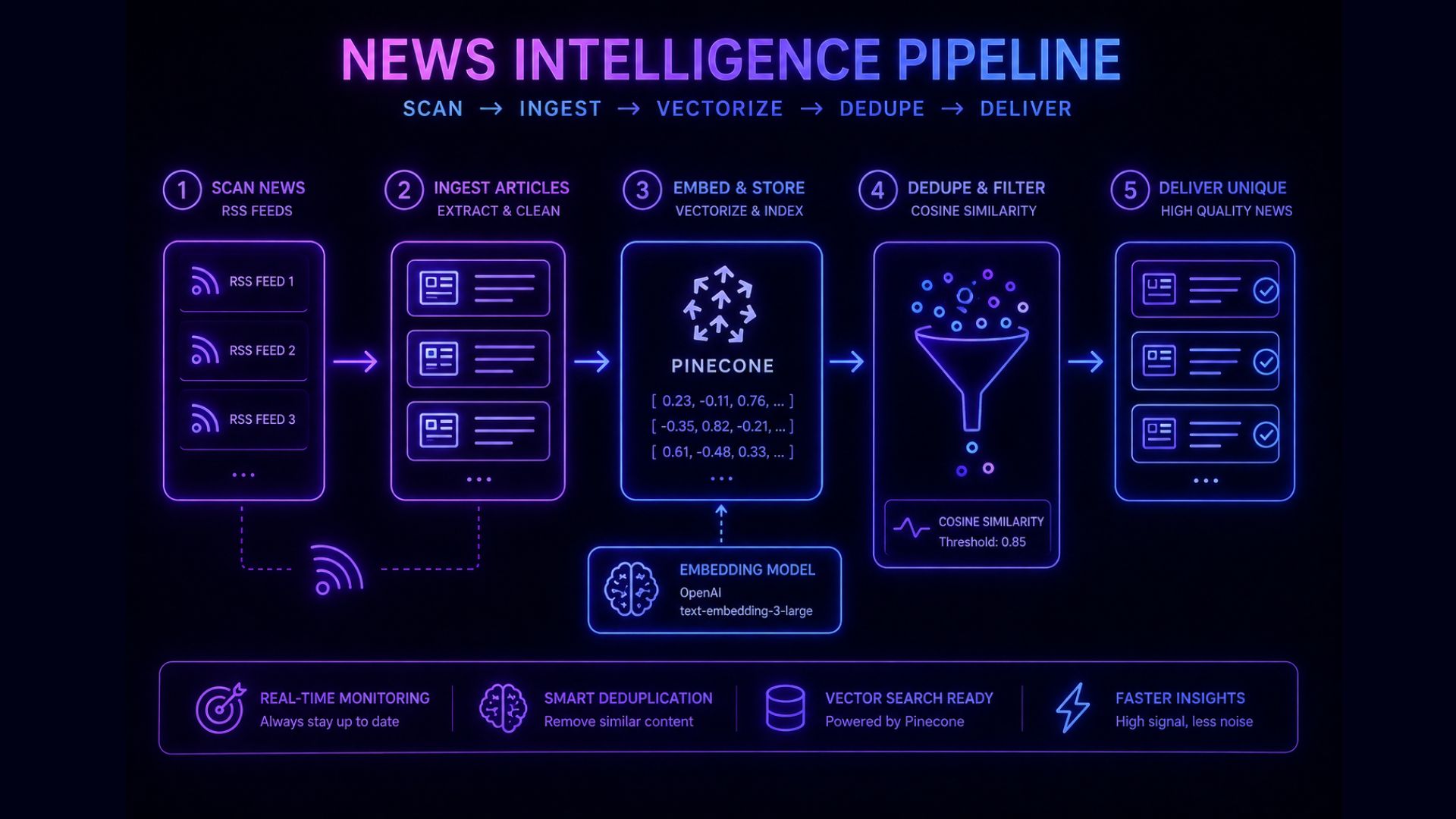

Designed a real-time content pipeline that uses vector embeddings and similarity search to detect duplicate news articles at a semantic level. This enabled accurate deduplication across sources where traditional URL-based methods fail.

Overview

This project involved building a real-time news aggregation system for a UAE-based client (name undisclosed). The system collects and processes articles from multiple global and regional news sources.

A key requirement was that duplicate content never be stored, even when the same article appeared in different publications under different URLs.

The Challenge

- Identical or near-identical articles published across multiple sources

- Different URLs for the same content made traditional deduplication ineffective

- High volume of incoming articles requiring real-time processing

- Need to maintain a clean, non-redundant content database

The core challenge was to move from URL-based filtering to content-level, semantic deduplication.

Approach

Real-Time Ingestion Pipeline

Designed a system to continuously ingest news articles from multiple sources in real time.
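A minimal sketch of such an ingestion loop follows. It assumes that sources are polled and that articles flow through a bounded `asyncio.Queue` to a pool of workers. The source does not say how ingestion was actually built (polling, webhooks, or a message broker would all fit), so `Article`, `poll_source`, `worker`, `run_pipeline` and the injected `fetch`/`handle` callables are hypothetical names.

```python
import asyncio
import logging
from dataclasses import dataclass


@dataclass
class Article:
    source: str
    url: str
    title: str
    body: str


async def poll_source(name, queue, fetch, rounds, interval_s):
    """Hypothetical poller: asks `fetch` for new articles from one source and enqueues each."""
    for _ in range(rounds):  # a production poller would loop indefinitely
        for article in await fetch(name):
            await queue.put(article)  # waits while the queue is full (backpressure)
        await asyncio.sleep(interval_s)


async def worker(queue, handle):
    """Takes articles off the queue as they arrive and passes each to the processing step."""
    while True:
        article = await queue.get()
        try:
            await handle(article)
        except Exception:
            # Log and carry on so one bad article does not stop the worker.
            logging.exception("failed to process %s", article.url)
        finally:
            queue.task_done()


async def run_pipeline(sources, fetch, handle, rounds=1, interval_s=0.0, workers=4):
    """Runs one poller per source and a fixed pool of workers over a shared bounded queue."""
    queue = asyncio.Queue(maxsize=1000)
    consumers = [asyncio.create_task(worker(queue, handle)) for _ in range(workers)]
    await asyncio.gather(*(poll_source(s, queue, fetch, rounds, interval_s) for s in sources))
    await queue.join()  # returns once every enqueued article has been handled
    for task in consumers:
        task.cancel()
    await asyncio.gather(*consumers, return_exceptions=True)
```

In this arrangement `handle` would be the embed, search, and store-or-discard step. The bounded queue keeps a burst from one source from exhausting memory.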

Embedding Generation

Converted each article into a vector embedding using OpenAI’s embedding models, giving a semantic representation of its content.
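A minimal sketch of this step follows. It assumes the OpenAI Python SDK (v1 or later) and the `text-embedding-3-small` model. The source names neither the model nor how article text was prepared, so `article_text`, the title-plus-body input, and the 8,000-character cap are illustrative assumptions rather than the project's actual settings.

```python
from __future__ import annotations


def article_text(title: str, body: str, max_chars: int = 8000) -> str:
    """Builds the string to embed: title plus body, whitespace collapsed, then truncated.

    The character cap is an assumed, rough guard against the model's input token limit.
    """
    text = " ".join(f"{title} {body}".split())
    return text[:max_chars]


def embed_texts(texts: list[str], model: str = "text-embedding-3-small") -> list[list[float]]:
    """Embeds a batch of texts in one API call; the client reads OPENAI_API_KEY from the environment."""
    from openai import OpenAI  # lazy import: article_text works without the SDK installed

    client = OpenAI()
    response = client.embeddings.create(model=model, input=texts)
    # Each result carries an `index`; sorting on it keeps vectors aligned with `texts`.
    return [item.embedding for item in sorted(response.data, key=lambda d: d.index)]
```

At high volume, sending several articles in one `embeddings.create` call reduces the number of requests compared with embedding them one at a time.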

Vector Database Integration

Stored embeddings in Pinecone, allowing efficient similarity search across a growing dataset of articles.
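The storage and lookup calls can be sketched as follows, assuming the Pinecone Python SDK (v3 or later). In that SDK, `Index.upsert` accepts records with `id`, `values` and `metadata`, and `Index.query` returns `matches` that carry `id` and `score`. The index name `news-articles`, the metadata fields, and the helper names `make_record`, `nearest_existing` and `open_index` are hypothetical.

```python
from __future__ import annotations

import os


def make_record(article_id: str, vector: list[float], url: str, source: str) -> dict:
    """Shapes one article as an upsert record; metadata keeps the URL and source for later lookup."""
    return {"id": article_id, "values": vector, "metadata": {"url": url, "source": source}}


def nearest_existing(index, vector: list[float], top_k: int = 3) -> list[tuple[str, float]]:
    """Returns (id, score) pairs for the stored articles closest to `vector`.

    `index` is a `pinecone.Index` or any object with a compatible `query` method.
    """
    response = index.query(vector=vector, top_k=top_k, include_metadata=True)
    return [(match.id, match.score) for match in response.matches]


def open_index(name: str = "news-articles"):
    """Connects to an existing index; expects PINECONE_API_KEY in the environment."""
    from pinecone import Pinecone  # lazy import: the helpers above need no SDK

    return Pinecone(api_key=os.environ["PINECONE_API_KEY"]).Index(name)
```

For cosine scores, the index would need to be created with `metric="cosine"` and a dimension matching the embedding model (1,536 for `text-embedding-3-small`). Pinecone indexes are eventually consistent, so an article upserted moments ago may not yet appear in query results. Copies of the same story arriving in a burst could therefore slip past the check. A short-lived local cache of recent vectors is one way to cover that gap.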

Semantic Similarity Detection

Implemented similarity search, using a metric such as cosine similarity, to compare each new article against stored ones and identify duplicates based on content rather than URLs.
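The comparison can be illustrated with a brute-force sketch in plain Python. In the described system Pinecone performs this search over the stored vectors, so `cosine_similarity` and `most_similar` are hypothetical helpers that only show what the metric computes: cos(a, b) = (a · b) / (|a| |b|). The result is 1.0 for vectors pointing the same way and 0.0 for orthogonal ones.

```python
from __future__ import annotations

import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(a, b) = (a . b) / (|a| |b|), ranging from -1.0 to 1.0."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / norm


def most_similar(new_vec: list[float], stored: dict[str, list[float]]) -> tuple[str, float] | None:
    """Linear scan for the stored article most similar to `new_vec`; None if nothing is stored."""
    best = None
    for article_id, vec in stored.items():
        score = cosine_similarity(new_vec, vec)
        if best is None or score > best[1]:
            best = (article_id, score)
    return best
```

OpenAI states that its embeddings are normalised to unit length. For such vectors, the dot product alone gives the same ranking as cosine similarity.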

Threshold-Based Filtering

Applied a configurable similarity threshold to determine whether an incoming article should be stored or discarded.
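One way to express the configurable threshold is sketched below. `decide` takes the (article_id, score) pairs returned by a similarity query and keeps the article unless its best match reaches the threshold. `DedupDecision`, `decide` and the default of 0.92 are hypothetical. The source does not give the threshold value, which in practice would be tuned on a sample of labelled duplicate and non-duplicate pairs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DedupDecision:
    store: bool                # True: keep the incoming article
    duplicate_of: str | None   # id of the matching stored article when discarded
    score: float | None        # best similarity seen, if any


def decide(matches: list[tuple[str, float]], threshold: float = 0.92) -> DedupDecision:
    """Discards the article if its best match scores at or above `threshold`, else stores it."""
    if not -1.0 <= threshold <= 1.0:
        raise ValueError("threshold must lie within the cosine range [-1, 1]")
    if not matches:
        return DedupDecision(store=True, duplicate_of=None, score=None)
    best_id, best_score = max(matches, key=lambda m: m[1])
    if best_score >= threshold:
        return DedupDecision(store=False, duplicate_of=best_id, score=best_score)
    return DedupDecision(store=True, duplicate_of=None, score=best_score)
```

The threshold trades off two errors. Raising it lets more rewritten versions of the same story through. Lowering it risks discarding distinct articles that merely cover the same event.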


Outcome

The result was a semantic deduplication system that ensures only unique content enters the database, even when the same story is sourced from multiple overlapping publishers.

Engagement

Worked with a Bangalore-based agency on a UAE-based account (name undisclosed) to design and implement the system, with a focus on building a scalable, efficient content pipeline.

The solution was designed to handle increasing data volumes while maintaining high accuracy in content filtering and deduplication.